Gemma 4 Gets Multi-Token Prediction Drafters: 3x Faster Inference, Same Outputs

Lead — On May 5, 2026, Google released Multi-Token Prediction (MTP) drafters for the Gemma 4 family of open models. The drafters use speculative decoding to deliver up to a 3x speedup in inference latency without changing model outputs, and they ship under the same Apache 2.0 license as the underlying models.

Advanced

What Was Released

Google DeepMind shipped MTP drafter models paired with four Gemma 4 variants: the 31B dense flagship, the 26B A4B Mixture-of-Experts model, and the on-device E2B and E4B edge models. Each drafter is published as a standalone checkpoint on Hugging Face and Kaggle, and the runtimes that already serve Gemma 4 — Hugging Face Transformers, MLX, vLLM, SGLang, Ollama, and LiteRT-LM — pick up the drafters with minimal configuration. Mobile users can try the E-series drafters directly through Google AI Edge Gallery on Android and iOS.

Importantly, the release is a follow-up rather than a re-release. Gemma 4 itself launched on April 2, 2026 without any speculative decoding assets. The drafters add a new inference path on top of the existing weights; the target models are unchanged.

How Speculative Decoding Works Here

Standard autoregressive decoding generates one token per forward pass through the full model. Speculative decoding decouples generation from verification: a small drafter proposes several candidate tokens cheaply, then the large target model verifies them all in a single parallel forward pass. If a prefix of the draft is accepted, the target emits all of those tokens in the wall-clock time of one step.

Gemma 4’s MTP drafters are not independent small models — they are tightly coupled to the target. Three architectural choices make this work:

- Shared input embeddings. The drafter reuses the target model’s embedding table instead of learning its own.

- Target-activation conditioning. The drafter concatenates the target model’s last-layer activations with token embeddings and down-projects the result, so it builds directly on representations the target has already computed.

- Shared KV cache. Drafters reuse the target’s key-value cache rather than rebuilding context, which removes the dominant prefill cost in long-context generation.

The E2B and E4B edge variants add an efficient embedder: tokens are clustered in advance, and the model first predicts a likely cluster and then restricts the final logit calculation to tokens inside it. That cuts the dominant softmax cost on small devices.

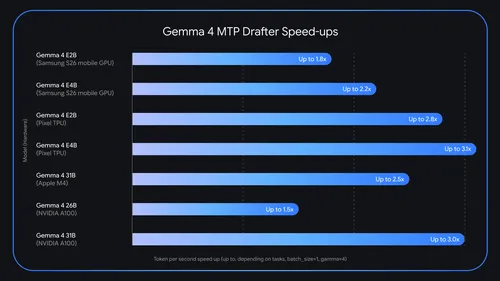

Measured Speedups

Google reports up to 3x end-to-end speedup with no quality regression — the target model still does the verification, so accepted tokens are bit-for-bit identical to greedy decoding from the target alone. The chart below shows tokens-per-second across the Gemma 4 family on representative hardware.

One caveat is worth flagging for MoE deployments. The 26B A4B target activates only a subset of experts per token, but verifying a multi-token draft can require additional experts to be loaded from memory — partially offsetting the drafting gain at batch size 1. Google measured roughly 2.2x throughput on Apple Silicon at batch sizes 4–8 for the MoE variant, and similar gains on NVIDIA A100 once batches grow. Dense and edge variants benefit even at low concurrency.

What This Means

Speculative decoding is not new — DeepMind’s original speculative sampling paper dates to 2022, and Meta’s MTP work in DeepSeek V3 and V4 popularised the multi-token-prediction training objective. What Gemma 4 ships is the first first-party, openly-licensed pairing of frontier open-weight models with purpose-built drafters that share embeddings, activations, and KV cache. For anyone running Gemma 4 in production, the upgrade path is essentially free: same model quality, same license, fewer GPU-seconds per response.

It also pressures the open-weight ecosystem. Llama, Qwen, and DeepSeek already train MTP-aware variants, but ship without official drafter checkpoints; community drafters exist but are uneven in quality. A polished Apache-2.0 drafter release sets a baseline that other vendors will likely match.

Related Coverage

- Google Releases Gemma 4: Frontier Open Models Under Apache 2.0 — the April 2, 2026 base release that today’s MTP drafters accelerate.

- Qwen3.6-27B: A Dense 27B Model That Beats a 397B MoE on Coding — the dense open-weight model Gemma 4 31B competes with most directly.

- Unveiling T5Gemma: Google’s Encoder–Decoder Gemma Models — earlier architectural experiment in the Gemma line.

Sources

- Accelerating Gemma 4: faster inference with multi-token prediction drafters — Google Blog announcement.

- Speed-up Gemma 4 with Multi-Token Prediction — Google AI for Developers technical overview.

- Gemma 4 MTP using Hugging Face Transformers — code-level usage guide.

- google/gemma-4-31B-it-assistant — drafter model card on Hugging Face.

- Gemma 4 — Google DeepMind — base model family page.

沪公网安备31011502017015号

沪公网安备31011502017015号